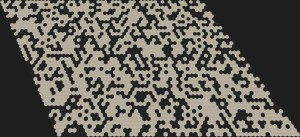

This is the first post in a new series about my experiences with dungeon generators. As you might expect, I have a new game project (since February this year), which is based on a hex-grid. The game mechanics will be something between Hack-n-Slay and Pokémon, but more on that later on a dedicated project page.

First, there is an extremely valuable source for many algorithms on a hex-grid: http://www.redblobgames.com/grids/hexagons/ [1]. This site is one of the best web pages I have seen so far. It has very helpful explanations for all hex-grid problems and shows interactive examples for each of them. Moreover, if you change an option in one example, all others adapt to the same option. If you don't understand a hex-grid-related detail in this post, just have a look at that site.

Generating Caves on Hex-Grids (Cellular Automaton)

There are many types of dungeons and generators, especially on quad-grids. You can have special maze generators, room based dungeons and caves. This post shows how to generate caves on the hex-grid.

Cellular automata seem to be the most common approach for generating caves. One source I found inspirational is this article [2]. However, I was not able to find sources on good hex-grid cave generator rules quickly, so I started experimenting and here are my results.

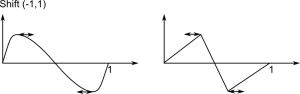

The basic idea is to start with a random initialization (floor/wall) and apply some iterations of a cellular automaton. This automaton will check the neighborhood of a cell and decide which type the cell gets in the next turn, based on the number of certain elements in the vicinity. Let $C(F,1)$ (or $C(W,1)$) be the count of Floor (or Wall) tiles around a cell within a range of 1, excluding the tile itself. This count can be a number in $[0,6]$. Then, the following rules produced good cave maps:

    Init(F, 65%)  // Set 65% of the tiles as floor and the others as wall
    repeat
        if (W && C(W,1)>=2) || (F && C(W,1)>=4) W
        else F

One important thing is when and how the new type is set. Usually, an automaton would change all states at once, after the types of all cells are determined. Directly changing the cell type will also change the neighborhood of the next cell, which can lead to a fast collapse into a single type only. Luckily the above rule handles direct changes very well. So, yes I am changing types immediately...
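The rule and the immediate update can be sketched in Python roughly as follows. This is only a minimal sketch and not my actual implementation: the axial coordinates, the dictionary-based map, the names `init_map`, `count_walls` and `step_in_place`, and the choice to count positions outside the map as wall are all assumptions made for the example. The initialization also sets each tile to floor with a probability of 65% instead of hitting the ratio exactly.

```python
import random

# Axial-coordinate offsets of the six neighbours of a hex cell.
HEX_DIRS = [(1, 0), (1, -1), (0, -1), (-1, 0), (-1, 1), (0, 1)]
FLOOR, WALL = "F", "W"


def init_map(width, height, floor_ratio=0.65, seed=0):
    """Each tile becomes floor with probability floor_ratio, else wall."""
    rng = random.Random(seed)
    return {
        (q, r): FLOOR if rng.random() < floor_ratio else WALL
        for r in range(height)
        for q in range(width)
    }


def count_walls(cells, q, r):
    """C(W,1): wall tiles among the six neighbours (centre excluded)."""
    # Assumption: positions outside the map count as wall.
    return sum(cells.get((q + dq, r + dr), WALL) == WALL for dq, dr in HEX_DIRS)


def step_in_place(cells):
    """One automaton iteration that writes each new type immediately."""
    for q, r in list(cells):
        walls = count_walls(cells, q, r)
        # A wall stays wall with >= 2 wall neighbours,
        # a floor tile turns into wall with >= 4 wall neighbours.
        needed = 2 if cells[(q, r)] == WALL else 4
        cells[(q, r)] = WALL if walls >= needed else FLOOR


cave = init_map(40, 30, seed=1)
for _ in range(2):
    step_in_place(cave)
```

A variant that changes all states at once would compute the new types into a second dictionary and swap afterwards.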

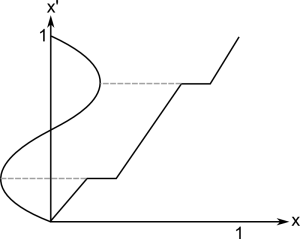

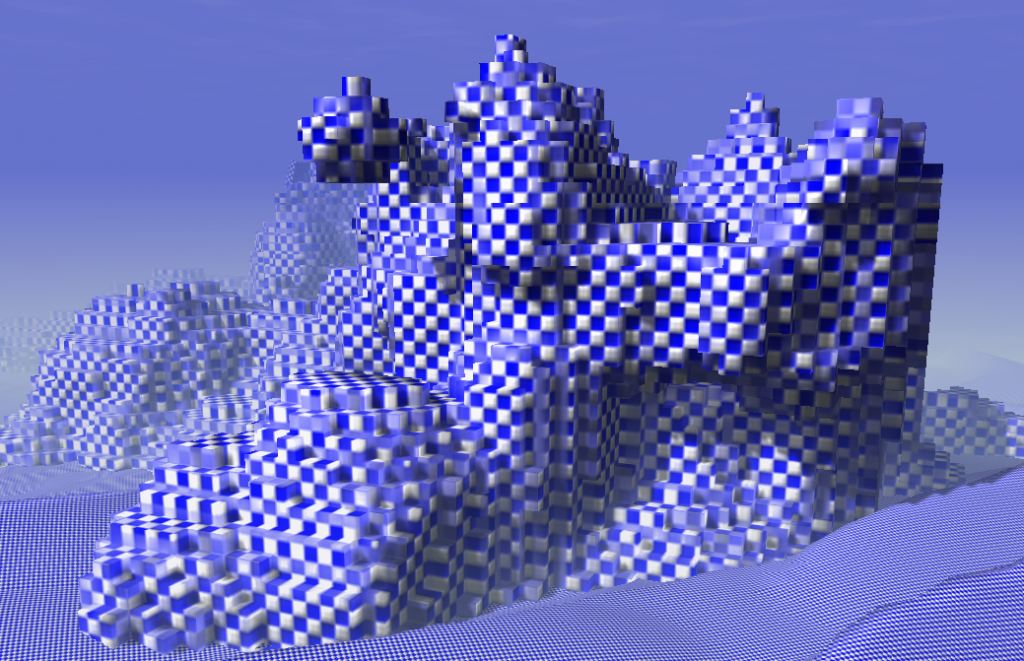

Here are two examples with different initialization conditions (60% and 65%), using the same seed. Each row shows the initialization and two iterations.


The base shape is determined by the simple fact that I loop over my memory in a rectangular fashion during initialization. It could easily be changed, but I see no need for it. Using the 65% initialization, I did not see any caves with large disconnected components across several seeds. If a dungeon is too small, it can thus be regenerated with a high probability of success. However, even though there are only a few small disconnected components, we want to remove them before populating the dungeon. It would be a disaster if the boss were in another component and the only way out of the dungeon was to kill that boss... Extracting a single component is simply done with a flood fill.
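A flood fill on the hex-grid could look like the following sketch. It assumes that the floor tiles are stored as a set of axial coordinates; the function name `flood_fill` is made up for this example.

```python
from collections import deque

# Axial-coordinate offsets of the six neighbours of a hex cell.
HEX_DIRS = [(1, 0), (1, -1), (0, -1), (-1, 0), (-1, 1), (0, 1)]


def flood_fill(floor_tiles, start):
    """Return the set of floor tiles reachable from start via floor tiles."""
    if start not in floor_tiles:
        return set()
    seen = {start}
    queue = deque([start])
    while queue:
        q, r = queue.popleft()
        for dq, dr in HEX_DIRS:
            nxt = (q + dq, r + dr)
            if nxt in floor_tiles and nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


floor = {(0, 0), (1, 0), (1, 1), (5, 5)}
main_component = flood_fill(floor, (0, 0))  # (5, 5) is not reachable
```

Everything in `floor` that is not in `main_component` can then be turned back into wall.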

To make sure we know a good start point, we can modify the initialization. For example, I set all seven tiles around position (0,0) to floor. This gives an area of high floor density which will lead to even more floor tiles in the automaton iteration. It is more likely that the region grows than that it shrinks.
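As a small sketch, the seven tiles are the center plus its six neighbors. The dictionary-based map and the name `seed_start_area` are assumptions for this example.

```python
# Axial-coordinate offsets of the six neighbours of a hex cell.
HEX_DIRS = [(1, 0), (1, -1), (0, -1), (-1, 0), (-1, 1), (0, 1)]
FLOOR, WALL = "F", "W"


def seed_start_area(cells, center=(0, 0)):
    """Force the centre tile and its six neighbours to floor."""
    cq, cr = center
    for dq, dr in [(0, 0)] + HEX_DIRS:
        pos = (cq + dq, cr + dr)
        if pos in cells:  # ignore positions outside the map
            cells[pos] = FLOOR


cells = {(q, r): WALL for q in range(-2, 3) for r in range(-2, 3)}
seed_start_area(cells)
```

This would run after the random initialization and before the first automaton iteration.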

Fractal Noise as Generator

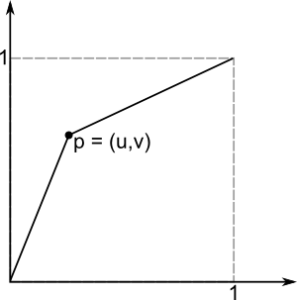

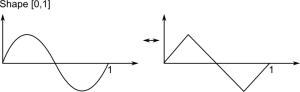

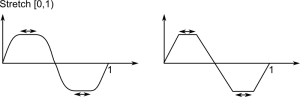

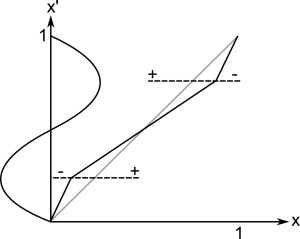

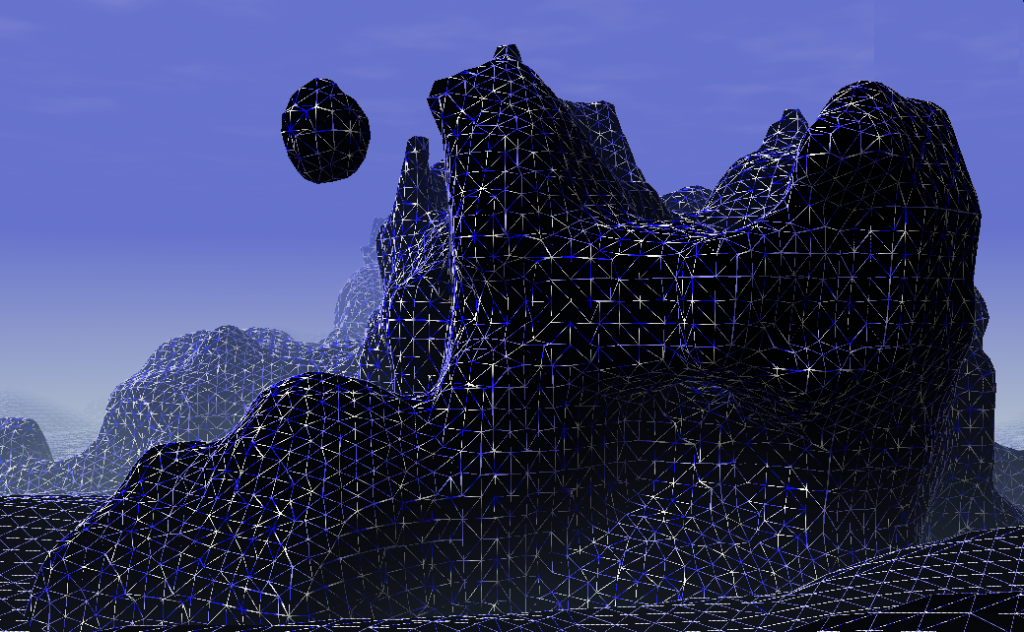

While the above rule is relatively stable, there are no real options to tweak properties such as the scale. Instead of trying to resample the map to get larger caves, as done by [2], I switched to another idea: generating a fractal noise (Value Noise or Perlin Noise) and using a threshold to determine occupied tiles. Assuming the fractal noise is in [0,1], a threshold of 0.5 should give roughly 50% occupancy. There are several degrees of freedom:

- The threshold: steers how much of the map is occupied (sparsity)

- The number of octaves: large scale vs. small scale structures

- The turbulence function (adding octaves, multiplying, ridged (abs), ...): different shapes
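These three degrees of freedom can be illustrated with a small sketch. To keep it short it uses a simple value noise instead of Perlin noise; the hash constants, the `scale` parameter and all function names are assumptions for this example and not taken from my generator.

```python
import math


def lattice_value(ix, iy, seed):
    """Pseudo-random value in [0, 1] for an integer lattice point."""
    h = (ix * 374761393 + iy * 668265263 + seed * 2147483647) & 0xFFFFFFFF
    h = ((h ^ (h >> 13)) * 1274126177) & 0xFFFFFFFF
    h ^= h >> 16
    return h / 0xFFFFFFFF


def value_noise(x, y, seed=0):
    """Smoothly interpolated lattice values (value noise)."""
    ix, iy = math.floor(x), math.floor(y)
    fx, fy = x - ix, y - iy
    sx = fx * fx * (3 - 2 * fx)  # smoothstep weights
    sy = fy * fy * (3 - 2 * fy)
    v00 = lattice_value(ix, iy, seed)
    v10 = lattice_value(ix + 1, iy, seed)
    v01 = lattice_value(ix, iy + 1, seed)
    v11 = lattice_value(ix + 1, iy + 1, seed)
    top = v00 + (v10 - v00) * sx
    bottom = v01 + (v11 - v01) * sx
    return top + (bottom - top) * sy


def fractal_noise(x, y, octaves=4, ridged=True, seed=0):
    """Sum of octaves, normalized so the result stays in about [0, 1]."""
    total, norm, amp, freq = 0.0, 0.0, 1.0, 1.0
    for i in range(octaves):
        n = value_noise(x * freq, y * freq, seed + i)
        if ridged:
            n = 1.0 - abs(2.0 * n - 1.0)  # fold around 0.5 to get ridges
        total += amp * n
        norm += amp
        amp *= 0.5
        freq *= 2.0
    return total / norm


def is_floor(q, r, threshold=0.5, scale=8.0):
    """Sample the noise at the centre of hex (q, r) in axial coordinates."""
    x = q + r / 2.0
    y = r * math.sqrt(3.0) / 2.0
    return fractal_noise(x / scale, y / scale) > threshold
```

A larger `scale` stretches the structures over more tiles, and a higher `threshold` leaves fewer floor tiles.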

As the noise function I used Perlin Noise with a ridged turbulence function. Unfortunately, any noise results in very straight boundaries at the edge of the generation area. Therefore, I gradually raise the threshold toward the outer boundary (quadratically over a radius of 10 tiles) until it reaches 1.
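One possible reading of this falloff, as a sketch: inside the map the base threshold applies, and within the last 10 tiles it rises quadratically to 1. The exact curve and the name `edge_threshold` are assumptions; `dist_to_edge` is the distance of a tile to the border of the generation area in tiles.

```python
def edge_threshold(base, dist_to_edge, falloff=10):
    """Threshold that rises quadratically from base to 1 near the border."""
    if dist_to_edge >= falloff:
        return base
    t = 1.0 - max(dist_to_edge, 0) / falloff  # 0 inside, 1 at the border
    return base + (1.0 - base) * t * t


for d in (0, 5, 10):
    print(d, edge_threshold(0.5, d))
```

Since the noise is at most 1, no tile directly on the border can pass the threshold, so the straight edge disappears.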

Of course, there is no guarantee for connected components, but this is the same as for the cellular automaton. I decided to fix this problem with a general approach described in the next section. But first, here are some examples of the generator output. The images have increasing threshold levels, which reduces the number of non-empty tiles and increases the chance of disconnected components. The green connections are created by the algorithm below.


Reconnecting Components

Rather than recreating dungeons until they happen to have the right size, it is better to increase connectivity. Starting with a known floor tile (e.g. the start position (0,0)) or a random one, one can mark all connected tiles with a flood fill. Then, another unmarked floor tile can be searched for and connected by a randomized path between the start region and this obviously disconnected region. I use an A* path search in which empty tiles have a random high cost in [5,20] (fixed for the duration of the search) and floor tiles have a low constant cost of 0.01. This leads to a non-straight connection between points of the two sets that are probably close to each other. After connecting the two regions, the new part can be marked the same way as the start region (it now belongs to it). When a simple scan-line is used for the search, all disconnected components will eventually be connected.
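The connection step could be sketched like this. It is a minimal A* under a few assumptions: the map is a dictionary over axial coordinates, the path must stay inside the map, and the names `carve_connection` and `hex_distance` are made up for the example. The heuristic is the hex distance times the floor cost, which never overestimates because every step costs at least 0.01.

```python
import heapq
import random

# Axial-coordinate offsets of the six neighbours of a hex cell.
HEX_DIRS = [(1, 0), (1, -1), (0, -1), (-1, 0), (-1, 1), (0, 1)]
FLOOR, WALL = "F", "W"
FLOOR_COST = 0.01


def hex_distance(a, b):
    """Number of steps between two hexes in axial coordinates."""
    dq, dr = a[0] - b[0], a[1] - b[1]
    return (abs(dq) + abs(dr) + abs(dq + dr)) // 2


def carve_connection(cells, start, goal, seed=0):
    """Find a cheap path from start to goal and turn it into floor."""
    rng = random.Random(seed)
    # Step costs are drawn once, so they stay fixed during the search.
    cost = {p: FLOOR_COST if t == FLOOR else rng.uniform(5, 20)
            for p, t in cells.items()}
    best = {start: 0.0}
    came_from = {start: None}
    heap = [(FLOOR_COST * hex_distance(start, goal), 0.0, start)]
    while heap:
        _, g, cur = heapq.heappop(heap)
        if cur == goal:
            break
        if g > best[cur]:
            continue  # stale heap entry
        for dq, dr in HEX_DIRS:
            nxt = (cur[0] + dq, cur[1] + dr)
            if nxt not in cells:
                continue
            new_g = g + cost[nxt]
            if new_g < best.get(nxt, float("inf")):
                best[nxt] = new_g
                came_from[nxt] = cur
                f = new_g + FLOOR_COST * hex_distance(nxt, goal)
                heapq.heappush(heap, (f, new_g, nxt))
    else:
        return []  # goal not reachable inside the map
    path = []
    node = goal
    while node is not None:
        path.append(node)
        node = came_from[node]
    path.reverse()
    for p in path:
        cells[p] = FLOOR
    return path
```

Because floor tiles are almost free, the search walks through the already connected region first and only pays the high random costs for the tiles it really has to carve.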

[1] http://www.redblobgames.com/grids/hexagons/

[2] http://www.roguebasin.com/index.php?title=Cellular_Automata_Method_for_Generating_Random_Cave-Like_Levels